- 14 July 2021

CS3MESH4EOSC just launched a demonstration video that explains how the different components of the Science Mesh federated platform work harmoniously between otherwise heterogeneous systems on different vendor platforms and data stores.

The Science Mesh platform is built on two main components:

- The IOP (Inter-Operability Platform): a software package, deployed on each of the sites, that enables interaction with other components of the Science Mesh. It guarantees compatibility of the use-cases across multiple vendor EFSS (Enterprise File Sync and Share) platforms and allows them to speak the common language of the CS3APIs, essential to the cross-integration of the various micro-services which constitute the platform;

- The “Central Service”: a collection of different software solutions, acting as a lightweight management node for the Science Mesh, which enables monitoring and site registration. Through the Central Service, the user can register to get an API (Application Programming Interface) key, which allows a node to effectively become part of the Mesh, and can access monitoring dashboards. The “Central Service” doesn’t introduce a single point of failure, as the nodes will still be able to operate in its absence.

The demonstration video explains how to access and use the Science Mesh platform:

- How to join the Science Mesh platform (with the APIs);

- How users share files with each other (with the invitation workflow);

- How to collaborate on documents in real time;

- Data Science Environments (to facilitate collaborative research).

How to Join the Science Mesh platform?

As a first step, a node installs the IOP alongside its Sync and Share platform. To connect the Sync and Share platform and register the node with the Science Mesh, the user must use the app for one of the three general-purpose vendor platforms supported by the Mesh: Nextcloud and ownCloud are supported now, and Seafile will be available at a later date.

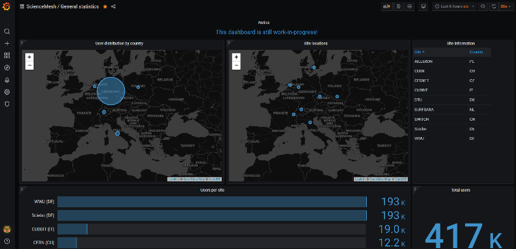

After the installation, several settings need to be defined, namely general information about the node (e.g., its name and URL), as well as various technical parameters (e.g., the IOP address). The node then obtains an API key that enables communication between the node and the “Central Service”. At the end of the process, the node is automatically displayed in the Science Mesh dashboard.

Figure 1 - Science Mesh node dashboard
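The settings described above can be pictured as a small record that is checked before the node tries to join. The following is a minimal sketch only: the `NodeSettings` class, its field names and the `validate` and `is_ready_to_join` helpers are hypothetical names chosen for illustration, not part of the IOP or of any vendor app.

```python
from dataclasses import dataclass
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class NodeSettings:
    """Hypothetical container for the values a node operator fills in."""
    name: str                      # general information: display name of the node
    url: str                       # general information: public URL of the site
    iop_address: str               # technical parameter: address of the local IOP
    api_key: Optional[str] = None  # obtained from the Central Service afterwards


def _is_http_url(value: str) -> bool:
    """Return True if value looks like an absolute http(s) URL."""
    parsed = urlparse(value)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def validate(settings: NodeSettings) -> List[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    if not settings.name.strip():
        problems.append("node name is empty")
    if not _is_http_url(settings.url):
        problems.append("node URL is not an absolute http(s) URL")
    if not _is_http_url(settings.iop_address):
        problems.append("IOP address is not an absolute http(s) URL")
    return problems


def is_ready_to_join(settings: NodeSettings) -> bool:
    """A node is ready once its settings are valid and it holds an API key."""
    return not validate(settings) and bool(settings.api_key)
```

In this sketch a node with valid settings but no API key is not yet ready to join, which mirrors the order of steps in the text: settings first, API key second, dashboard listing last.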

An invitation workflow for file sharing between users

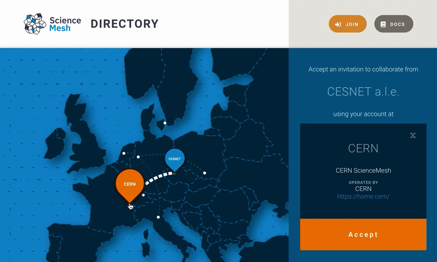

Once a site joins the mesh, its users can start sharing data with others. The video demonstration shows how a user at one site can share data with a user on a different EFSS without prior knowledge of the target user's identity in the system, while remaining compliant with the EU General Data Protection Regulation (GDPR) and other privacy laws.

Figure 2 - Mesh Directory service invitation acceptance form
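One way to picture an invitation workflow of this kind is a single-use token: the inviter's site issues a token, the inviter passes it on outside the system, and the invitee's identity becomes known to the inviter only when the token is accepted. The sketch below is an illustration under that assumption; the `Site` class and its methods are hypothetical and do not reproduce the actual Science Mesh or CS3APIs interfaces.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class Site:
    """Hypothetical in-memory model of one site's invitation bookkeeping."""
    name: str
    # token -> local user who created the invitation
    pending: Dict[str, str] = field(default_factory=dict)
    # local user -> remote users who accepted one of their invitations
    contacts: Dict[str, Set[str]] = field(default_factory=dict)

    def create_invitation(self, inviter: str) -> str:
        """Issue an unguessable token; no invitee identity is needed yet."""
        token = secrets.token_urlsafe(16)
        self.pending[token] = inviter
        return token

    def accept_invitation(self, token: str, invitee: str) -> Optional[str]:
        """Consume the token and record the invitee as a contact.

        Returns the inviter on success, or None for an unknown or
        already used token.
        """
        inviter = self.pending.pop(token, None)
        if inviter is None:
            return None
        self.contacts.setdefault(inviter, set()).add(invitee)
        return inviter
```

The point of the design is that identities are exchanged only after the invitee explicitly accepts, so neither site has to look up or expose user directories in advance.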

Allowing real-time collaboration in documents

Web-based applications which allow for concurrent editing by two or more users are becoming increasingly popular and are often seen as a more effective means of collaboration when compared to the traditional approach of sequential, “round-robin-style” editing of the same file by several users.

In the Science Mesh, once a data-sharing relationship has been established between users on different systems, they can use collaborative applications to work on shared content in real time. Several applications are being considered as part of the Science Mesh ecosystem, namely OnlyOffice, Collabora Online, Overleaf and the more lightweight CodiMD.

A Data Science Environment to facilitate collaborative research

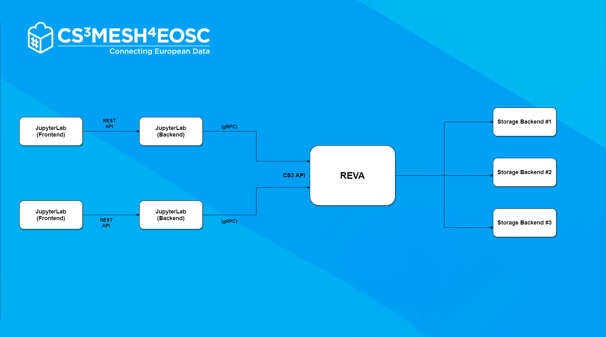

Data science environments integrated into the federated Science Mesh enable the cross-federation sharing of computational tools, algorithms and resources: users can access remote execution environments to replay (and modify) analysis algorithms without needing an account in the remote system. The video demonstration gives the example of the JupyterLab platform, integrated with Reva through the CS3APIs.

Figure 3 - JupyterLab integrated with IOP/Reva

Future steps for the platform implementation

One of the main goals for the next few months is to integrate the prototypes developed so far into the graphical user interface of EFSS services, to make them usable by regular users. Furthermore, the CS3MESH4EOSC project aims to conclude the integration of Seafile as the third vendor platform directly supported by the mesh.

The Science Mesh team will also analyse the main features of the Science Mesh platform for the use-cases that are being implemented in the project, which represent the ultimate test to confirm the potential of Science Mesh in facing data sharing challenges across Europe and beyond.

Watch the full Demonstration Video